As design lead on AlgoBuilder, an AI-powered fintech product, I owned the UX strategy, user flows, and interaction design across the full Build → Code → Backtest → Results pipeline, taking traders from a plain-English idea to downloadable, production-ready trading code they trust enough to run live.

Problem

Most retail traders have strategy ideas but can't code. Hiring freelancers is expensive, buying pre-built Expert Advisors (EAs) requires blind trust, and learning MQL5, the MetaTrader 5 programming language, takes weeks.

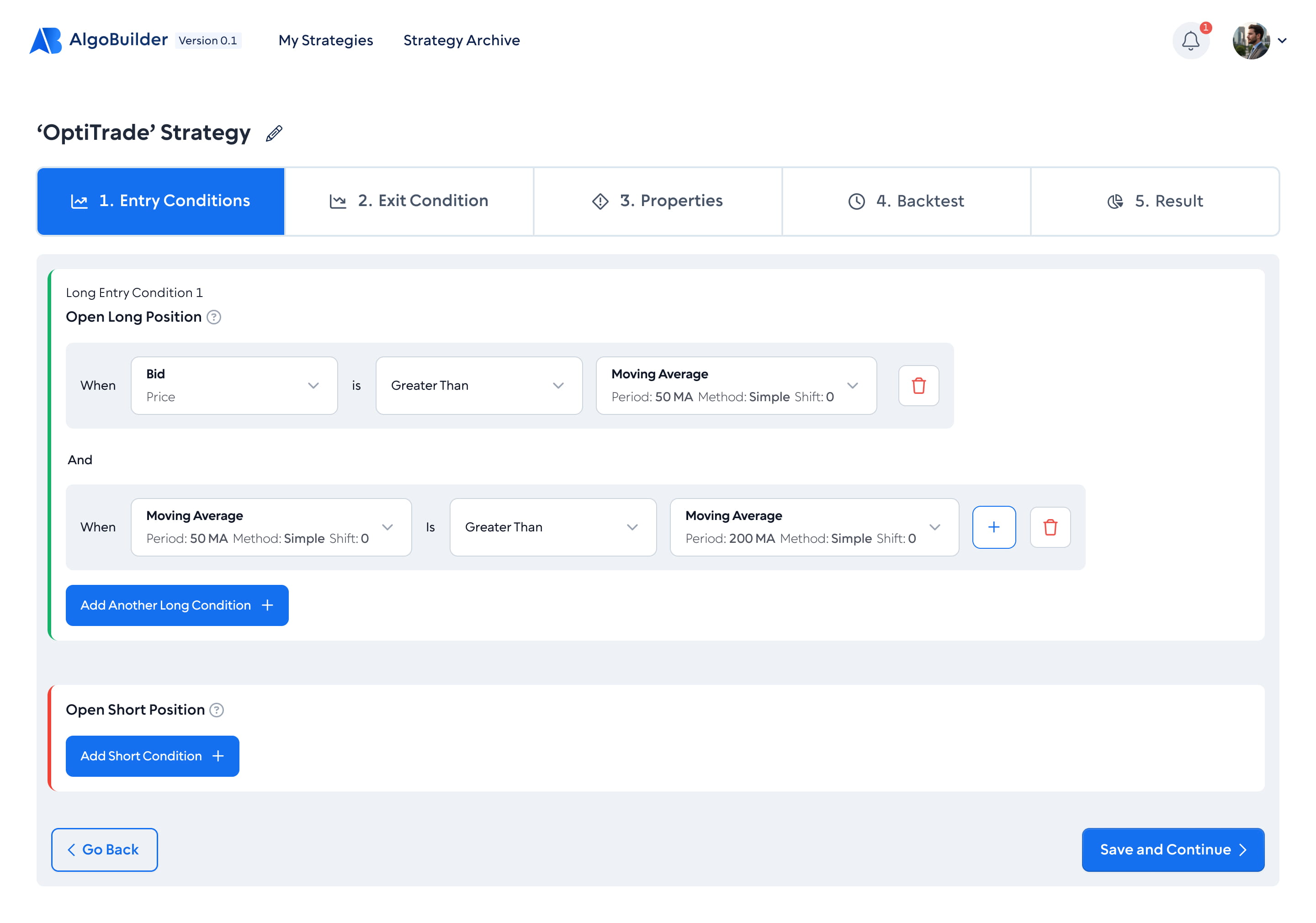

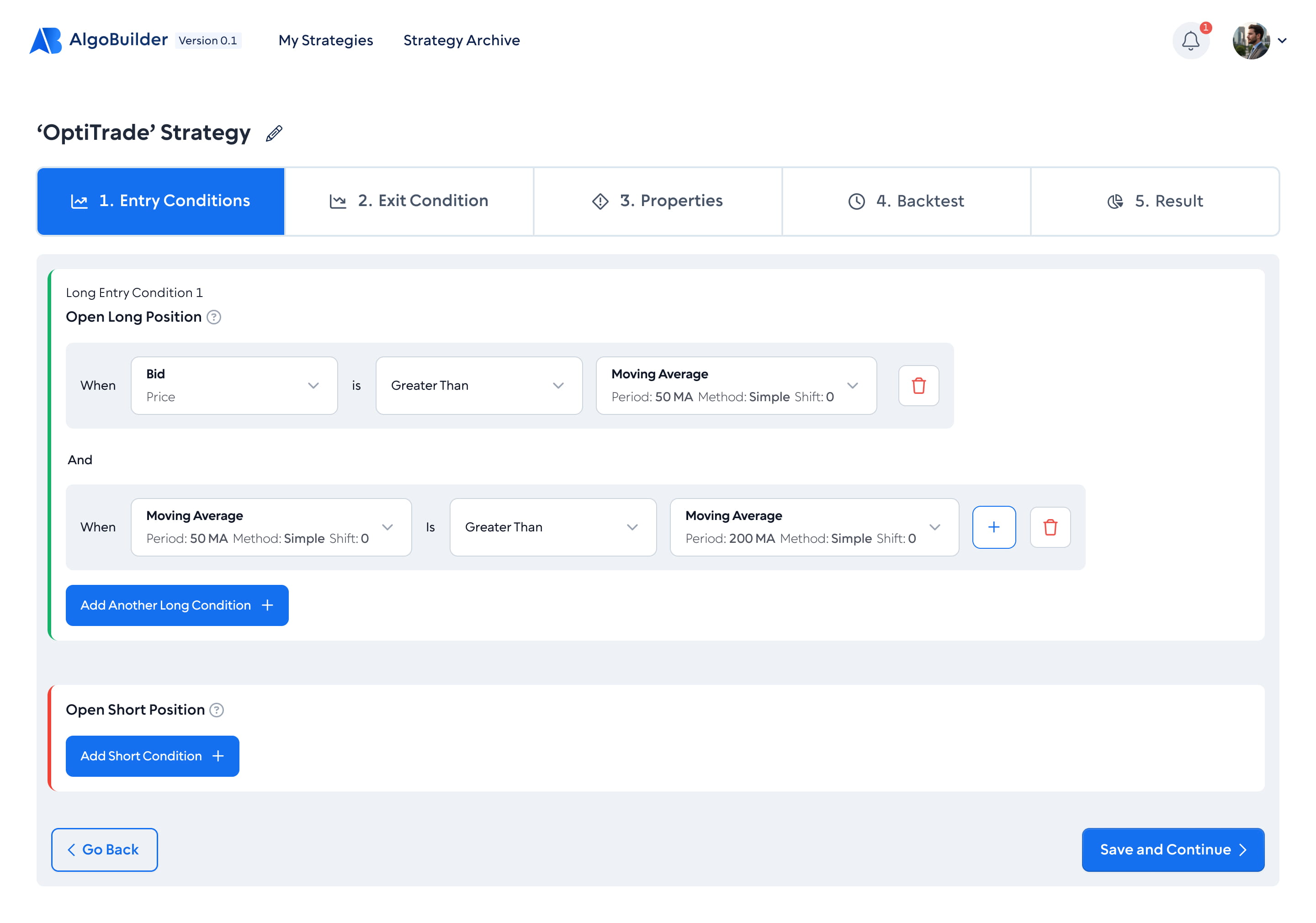

Milestone 1 tried a form-based approach: dropdowns, condition rows, Boolean operators. It lowered the barrier to entry, but still required users to think in technical abstractions.

Reviewing usage data and support conversations from Milestone 1 surfaced a consistent pattern: users who completed strategy setup rarely moved on to backtesting, and most abandoned around indicator configuration. The form modelled the code, not the way a trader thinks.

Key Decision

Trust in AI-generated code is a UX problem. I designed a four-tab flow (Build, Code, Backtest, Results) where each step reduces uncertainty and builds the confidence needed to take the next one.

Step 1: Build

The first barrier is getting started. Traders describe their strategy in plain English: no forms, no dropdowns.

Step 2: Code

In Milestone 1, users couldn't see the code being generated. They were expected to download a file and run it on a live trading account without knowing what was inside. That's a big ask when real money is on the line. I redesigned this step to show the full MQL5 source as it is generated, line by line. No black box, no leap of faith.

Step 3: Backtest

The gap between "built" and "tested" was where users dropped off. They'd complete a strategy but never backtest it: the cognitive cost of configuring a separate test was too high. So backtesting lives one click away, inside the same flow.

Step 4: Results

Numbers alone didn't create confidence. Even with strong backtest metrics, traders wanted to watch the strategy execute on real price data before trusting it with real money. The Results step pairs the metrics with that view, giving them what they need to make the call.

Designing for the model, not around it

Natural language is ambiguous and LLMs hallucinate. In a fintech product where the output runs on real money, the UI has to assume the model will sometimes misinterpret intent or generate broken code. Three design decisions handle that directly.

When the prompt is underspecified, the AI asks a follow-up question instead of guessing. The strategy summary stays empty until the user confirms, so no silent assumptions get baked into the code.

The strategy summary on the right of the Build screen is the AI's interpretation made visible and editable. Users can correct a misread rule before a single line of MQL5 is written.

Generated code runs through a syntax check before the "Run backtest" button becomes active. If the check fails, the error surfaces inline and the user is routed back to refine the prompt, so the flow never hands off a broken EA.
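To make the gating concrete, here is a minimal TypeScript sketch of the three rules above collapsed into a single state check. It is illustrative only: the names (`BuildState`, `Gate`, `nextGate`, `canRunBacktest`) are hypothetical rather than AlgoBuilder's actual code, and it assumes the summary has an explicit confirmed flag and that the syntax check returns a list of error messages.

```typescript
// Hypothetical model of the Build → Code → Backtest gating rules.
// Names and fields are illustrative assumptions, not the product's real code.
type BuildState = {
  needsClarification: boolean; // AI has asked a follow-up question that is still unanswered
  summaryConfirmed: boolean;   // user has confirmed the strategy summary (assumed flag)
  syntaxErrors: string[];      // messages from the syntax check on the generated MQL5
};

// What the UI should show next; only "run-backtest" enables the button.
type Gate =
  | { kind: "ask-follow-up" }
  | { kind: "confirm-summary" }
  | { kind: "fix-prompt"; errors: string[] }
  | { kind: "run-backtest" };

function nextGate(s: BuildState): Gate {
  // 1. Ask instead of guessing when the prompt is underspecified.
  if (s.needsClarification) return { kind: "ask-follow-up" };
  // 2. No code is trusted until the user confirms the AI's interpretation.
  if (!s.summaryConfirmed) return { kind: "confirm-summary" };
  // 3. A failed syntax check surfaces inline and routes back to the prompt.
  if (s.syntaxErrors.length > 0) return { kind: "fix-prompt", errors: s.syntaxErrors };
  return { kind: "run-backtest" };
}

function canRunBacktest(s: BuildState): boolean {
  return nextGate(s).kind === "run-backtest";
}

// Usage: a confirmed strategy whose generated code failed the syntax check.
const example: BuildState = {
  needsClarification: false,
  summaryConfirmed: true,
  syntaxErrors: ["line 12: undeclared identifier"],
};
console.log(nextGate(example).kind); // prints fix-prompt
console.log(canRunBacktest(example)); // prints false
```

Deriving both the button state and the inline error from one function means the UI cannot enable "Run backtest" while a clarification, an unconfirmed summary, or a syntax error is still outstanding.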

Beyond the product flow, I led the brand identity and built the design system from the ground up using atomic design methodology, with tokens, components, and patterns structured to scale. The result: a cohesive visual language the product shipped with from day one.